How well does an RTX 4090 run Qwen3.6-27B?

I asked one question: how well does an RTX 4090 run Qwen3.6-27B?

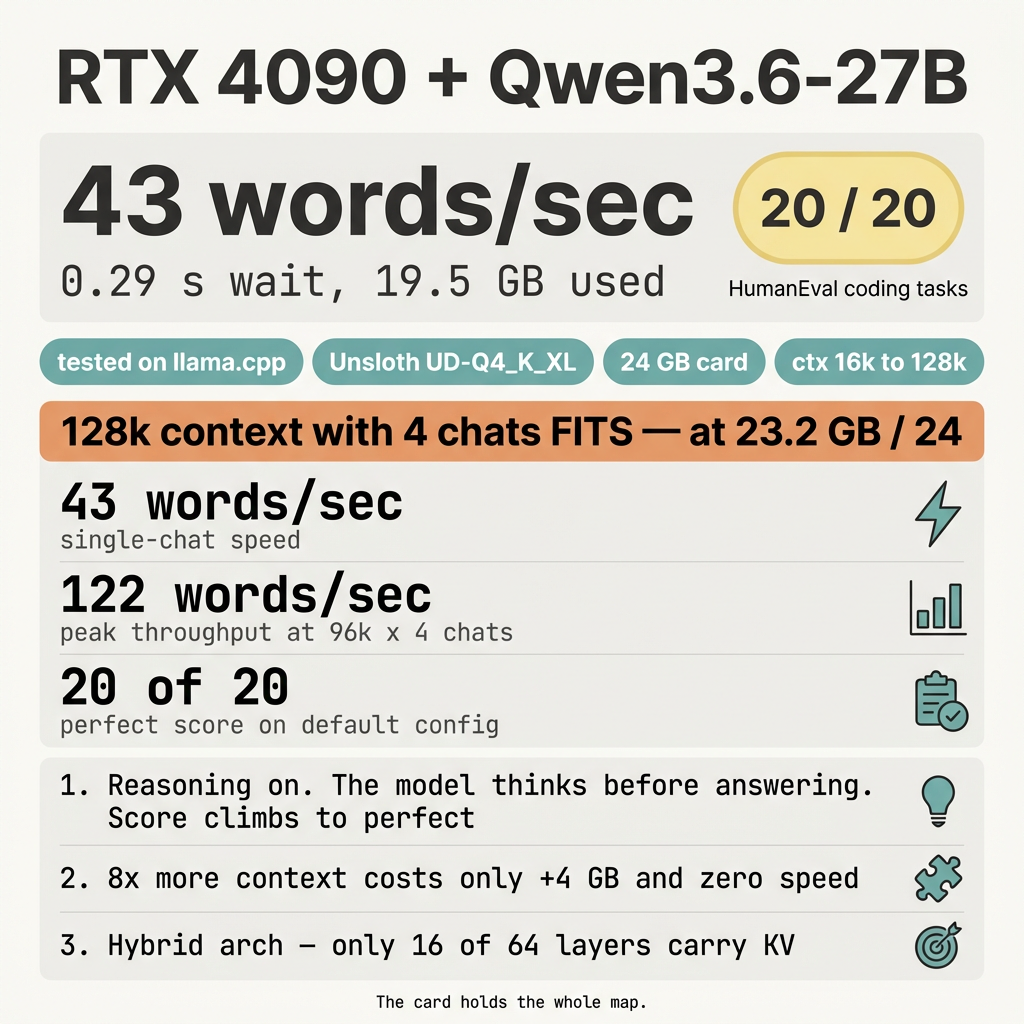

43 words a second. About a third of a second to wait. 20 of 20 coding problems solved. 19.5 GB of memory used out of 24.

The card runs this AI very well.

The Hybrid Free-Lunch Rule

Most AIs slow down hard when you give them more to read at once. This one does not.

I tested 5 reading-window sizes (16k up to 128k tokens) and 3 numbers of chats at the same time (1, 2, 4). All 15 setups ran. The biggest setup — 128k window with 4 parallel chats — fit on the 24 GB card with 0.8 GB to spare. Speed stayed the same.

Qwen3.6-27B uses a hybrid design where only 16 of its 64 layers carry a memory of past tokens. The other 48 are state-space layers that don’t need that memory. Growing the window grows a small part of the model, not the whole thing.

That’s the rule: on a hybrid model, long context is almost free.

What the words mean

- The AI: Qwen3.6-27B. The newest free, open AI in this size class. Reasoning-tuned. Strong at code.

- The card: RTX 4090. 24 GB of fast memory.

- The software: llama.cpp /

llama-server. Free, open. The numbers in this article come from that server. Other software (vLLM, Ollama, LM Studio) gives different numbers. - Quantisation: UD-Q4_K_XL from Unsloth. A “dynamic” 4-bit format. About 1 GB bigger than plain Q4_K_M. Buys back a bit of accuracy.

- Context window: how much the AI can read at once.

- Parallel chats: how many people can talk to it at the same time.

- Tokens per second: how fast the AI types. 43 tokens a second is about 30 English words a second.

- TTFT: the wait before the first letter shows up.

- pass@1: the AI writes code, the test passes on the first try. 20 of 20 = perfect.

The basic numbers

| What | Result |

|---|---|

| Speed (single chat) | 43 words/sec |

| Wait before first letter | 0.29 s |

| Correct answers (out of 20) | 20 |

| Card memory used | 19.5 GB out of 24 |

| AI file size on disk | ~17 GB |

20 questions came from a public coding test (HumanEval). Reasoning mode is on, so the AI thinks before answering. That makes its answers longer but more accurate. The score of 20 of 20 confirms it.

The full matrix — context × parallel chats

5 context sizes × 3 parallelism settings = 15 setups. Every single one ran. None crashed.

Words per second (all chats added together):

| ctx ↓ \ chats → | 1 chat | 2 chats | 4 chats |

|---|---|---|---|

| 16k | 43 | 72 | 122 |

| 32k | 43 | 74 | 97 |

| 64k | 43 | 73 | 100 |

| 96k | 43 | 74 | 122 |

| 128k | 43 | 75 | 97 |

Memory used out of 24 GB:

| ctx ↓ \ chats → | 1 chat | 2 chats | 4 chats |

|---|---|---|---|

| 16k | 18.9 GB | 19.1 GB | 19.4 GB |

| 32k | 19.5 GB | 19.6 GB | 19.9 GB |

| 64k | 20.6 GB | 20.7 GB | 21.0 GB |

| 96k | 21.6 GB | 21.8 GB | 22.1 GB |

| 128k | 22.7 GB | 22.9 GB | 23.2 GB |

Three things that surprise people

1. Eight times more context costs almost nothing

Going from 16k to 128k is eight times more reading. The cost: about 4 GB extra memory and zero speed loss.

2. The card runs out of math, not memory

At 128k with 4 chats, the card uses 23.2 GB. It still has 0.8 GB free — tight, but inside the budget. The bottleneck is the math part, not the memory part. Adding a fifth chat would split the same throughput pie thinner without making it bigger.

3. Quality stays high across all 15 setups

pass@1 lands between 0.90 and 1.00 across the matrix. No slow drop with longer context. The variation is sampling noise from the model’s temperature, not a context problem.

What to do

- One person, normal use: 32k context, 1 chat. Cheap, fast, easy.

- Hermes-agent / voice agent / long reasoning loop: 64k single chat.

- Whole codebase or long research: 128k single chat. Same speed.

- Multi-agent fan-out or batch jobs: 96k × 4 chats. Peaks at 122 words a second.

- Always turn on

--flash-attn onandq8_0cache. - Keep reasoning on for code work. The score climbs from “good” to “perfect.”

Configs you can copy

llama-server \

--model ~/.cache/hf-direct/qwen3.6-27b/Qwen3.6-27B-UD-Q4_K_XL.gguf \

--mmproj ~/.cache/hf-direct/qwen3.6-27b/mmproj-F16.gguf \

--alias qwen36 \

--host 0.0.0.0 --port 10003 \

--n-gpu-layers 999 \

--ctx-size 32768 \

--parallel 1 \

--jinja \

--reasoning on --reasoning-format none \

--temp 0.6 --top-p 0.95 --top-k 20 --min-p 0.0 \

--repeat-penalty 1.0 \

--cache-type-k q8_0 --cache-type-v q8_0 \

--flash-attn on \

--metrics

Big-context: change --ctx-size 32768 to --ctx-size 131072.

Multi-agent: also add --parallel 4.

Maxim

On a hybrid AI, long context is almost free. The card holds the whole map.

Recap

- An RTX 4090 runs Qwen3.6-27B at 43 words a second.

- 0.29 seconds before the first letter.

- 20 of 20 coding questions correct.

- 19.5 GB used at 32k. 23.2 GB at 128k × 4 chats.

- Hybrid Mamba/Attention design makes long context cheap.

- Quality stays high across all 15 tested setups.

- Peak throughput: 122 words a second at 96k × 4 (or 16k × 4).

- The card runs this AI very well.