The right context window for the right job

How big should an AI’s reading window be?

Most people pick the biggest number they can. That is wrong.

Bigger windows cost more money. They are slower. And past a certain size they are also less accurate.

Here is what scientists have found, what each kind of work actually needs, and how to pick the right size every time.

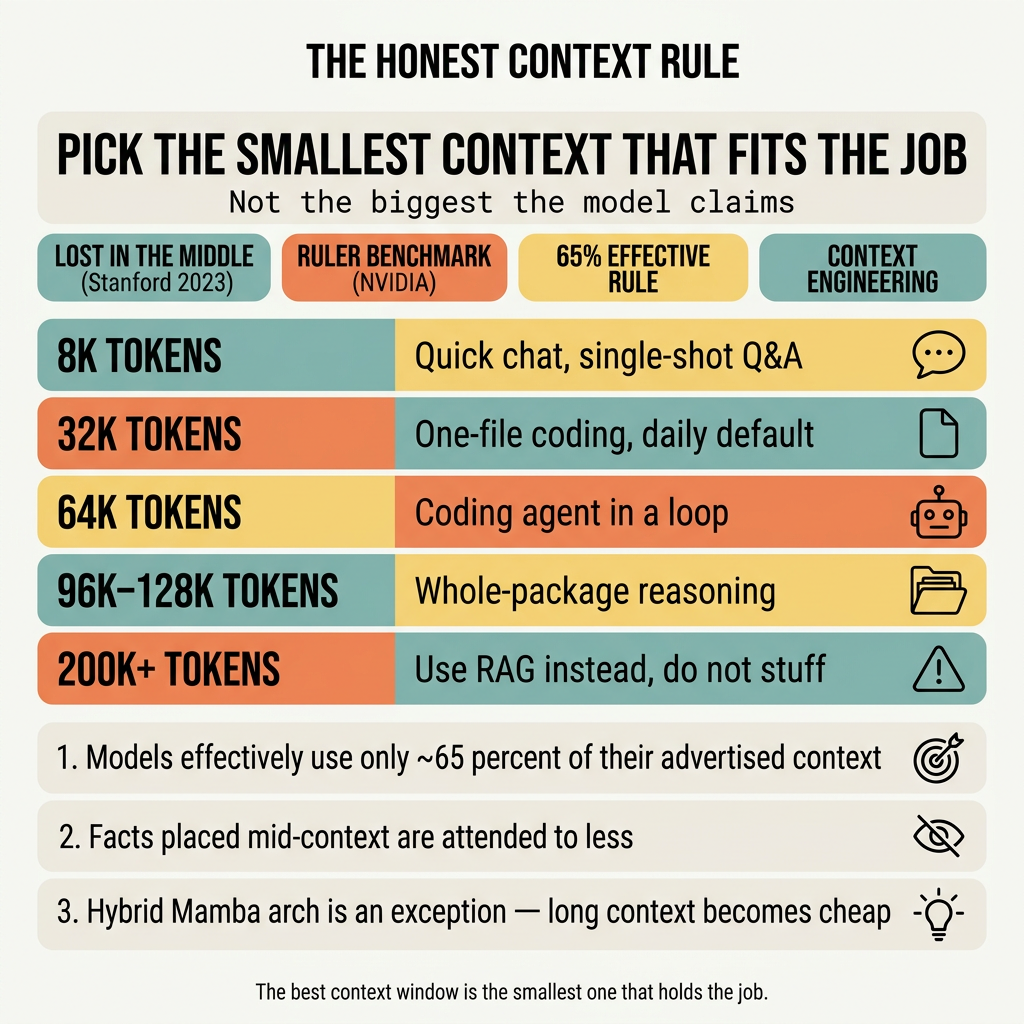

The Honest Context Rule

Pick the smallest window that fits the work. Not the biggest the AI brand claims.

Three reasons.

1. The advertised number lies. A model that says “128k context” usually only thinks clearly up to about 32k. Past that, accuracy drops. NVIDIA tested this with their RULER benchmark and almost every model they tried failed past their advertised number.

2. Bigger windows cost real money. Twice the window means twice the memory used. On a paid AI service, your bill doubles. On your own GPU, your VRAM fills up.

3. Bigger windows can drop quality. A 2023 Stanford paper called “Lost in the Middle” showed that AIs pay less attention to facts placed in the middle of a long window. A clue at token #5,000 of a 100k window can be ignored even if it is the answer.

The fastest, cheapest, most accurate setting is rarely the biggest one.

What is a context window, in plain words?

Imagine the AI as a person reading a stack of paper before answering you.

- The context window is how much paper the person can hold at once.

- A token is roughly half a word. 100 tokens = about 75 English words.

- 8k tokens = about 6,000 words = a long magazine article.

- 32k tokens = about 24,000 words = a short story.

- 128k tokens = about 96,000 words = a small novel.

Now imagine the person can read fast at the start, fast at the end, and gets sleepy in the middle. That is a real attention pattern in modern AIs. It is called “lost in the middle.”

What two studies actually found

Two papers shaped how serious people think about context size.

Lost in the Middle (Stanford, 2023)

Researchers gave AIs long passages with one fact buried inside, then asked questions about that fact. They moved the fact around to different positions.

Result: accuracy was highest when the fact was at the start or end of the window. When the fact was in the middle, accuracy dropped by more than 30 percent.

The reason is technical but the takeaway is simple: do not paste a 50,000-word document and assume the AI reads it like a human. Important stuff goes at the top or the bottom.

RULER (NVIDIA, 2024)

NVIDIA built a test that measures the real working window of any AI versus the size it advertises.

Result: most AIs advertise much more than they can actually use. A model card might say “128k context.” RULER’s truth: clear thinking only up to 32k - 64k for most models. Past 64k they miss facts, make things up, or give answers based on text from earlier in the window.

The general rule from the data: plan for about 65 percent of the advertised window as your reliable working capacity.

What different jobs actually need

Real work, not benchmarks.

| Job | Window that fits | Why |

|---|---|---|

| Quick question, single answer | 4k - 8k | Question + answer fits. More is wasted. |

| One file, one task | 16k | A 500-line file + your question + the model’s reply. |

| One real file with imports + a small test | 32k | Today’s coding sweet spot. Cheap, fast, accurate. |

| Multi-file fix inside one folder | 32k - 64k | A handful of files + your prompt + the model’s thinking. |

| Coding agent in a loop | 64k | The right default. Holds multiple turns of history, tool output, file diffs. |

| Long agent session, lots of tool calls | 64k - 96k | When the agent needs an hour of conversation history. |

| Reading a whole package or paper | 96k - 128k | Whole-repo reasoning. Quality already dropping in this range. |

| ”Drop the whole codebase in” | Probably wrong | Use RAG (search and fetch) instead. Bigger is not the answer. |

Why a coding agent specifically wants 64k

A coding agent eats window space fast in three ways at once.

Files it loads. A typical TypeScript file is 1.5k - 4k tokens. A package’s src/ folder is 30k - 80k. Even a “small” task can pull in 5-10k of supporting code.

Its own thinking. Reasoning models produce 1k - 2k tokens of internal “thinking” before every answer. Multiple turns multiply that.

Tool results. Test output, command output, file diffs — all live in the window until the agent clears them.

At 32k the agent hits “window full” mid-session. At 64k it barely notices. At 96k you are paying for headroom you rarely use.

What every extra token costs you

Every token you add to the window costs three things.

Memory. Most AIs keep a “key-value cache” for every token they have ever read. Doubling the window doubles this cache. On a paid AI service, that shows up as a higher bill. On a local GPU, you watch the VRAM fill.

Speed. The wait before the AI starts answering grows roughly with the square of the window. An AI that answers in 0.3 seconds at 4k can take 5+ seconds at 128k just to start writing.

Money. Most paid services charge per input token. A query with 100,000 tokens of context is 100x more expensive than the same query with 1,000. If a 1k window gives the same answer, you just paid 100x for nothing.

A few newer AI designs (hybrid Mamba-Attention models, sparse-attention models) charge much less for long context. But for the vast majority of models, every extra token is paid in cash, latency, and accuracy.

What about RAG?

RAG (Retrieval-Augmented Generation) is the alternative to “stuff everything in the window.”

Pattern: split your documents into small chunks. Save them in a search database. When a question comes in, search for the chunks most likely to contain the answer. Paste only those chunks into the AI.

Use RAG when:

- Your knowledge source is bigger than 200k tokens.

- The relevant fact is one paragraph buried in 100 pages.

- You want answers grounded in cited sources.

- You want to add new documents without retraining.

Stuff into the window when:

- The whole source fits in 64k.

- The AI needs to reason across the whole document, not just one chunk.

- The task is creative writing or refactoring, not factual lookup.

- Order or layout matters.

Rule of thumb: under 64k, paste it. Over 200k, use RAG. In between, measure both.

What to do

- Daily chat or a single question? Use 8k. Tiny, instant. Costs almost nothing.

- One file, no dependencies, one short answer? Use 16k. Just enough room for the file plus the model’s reply.

- Working on one real file with imports + a small test? Use 32k. The cheap default for 80 percent of coding work.

- Running a coding agent in a loop? Use 64k. The right default for 2026.

- Reading a small book or whole package? Use 96k. Past this, returns drop fast.

- Anything bigger? Stop and switch to RAG. Stuffing the whole codebase in a 200k window is the wrong tool.

- Always put the most important context at the start or end of the window. Never in the middle.

Pro tips

- A reasoning model burns 1k - 2k tokens of “thinking” per turn. Plan for it.

- “Bigger context = smarter answer” is wrong past about 64k for most models.

- If your AI keeps forgetting facts you gave it, the facts are probably stuck in the middle of the window. Move them to the top or bottom.

- The newest hybrid Mamba-Attention models are an exception: they pay almost nothing for longer context. If you are running one of those locally, the rule loosens.

Maxim

The best context window is the smallest one that holds the job.

Recap

- Most AIs effectively use only about 65 percent of the window they advertise.

- “Lost in the middle” means facts placed mid-window are attended to less.

- Daily coding fits in 32k. Coding agents fit in 64k. Almost no real job needs 128k.

- Past 200k, switch to RAG. Stuffing the whole codebase into the window is the wrong tool.

- A few new architectures lower the cost of long context, but the rule still holds.

- The best context window is the smallest one that holds the job.

Sources

- Liu et al., Lost in the Middle: How Language Models Use Long Contexts (Stanford, 2023)

- NVIDIA, RULER: What’s the Real Context Size of Your Long-Context Language Models? (2024, COLM)

- Anthropic, Effective context engineering for AI agents

- Morph, LLM Context Window Comparison 2026

- ByteByteGo, A Guide to Context Engineering for LLMs